Leaf-spine is the default. But it’s not always the right answer.

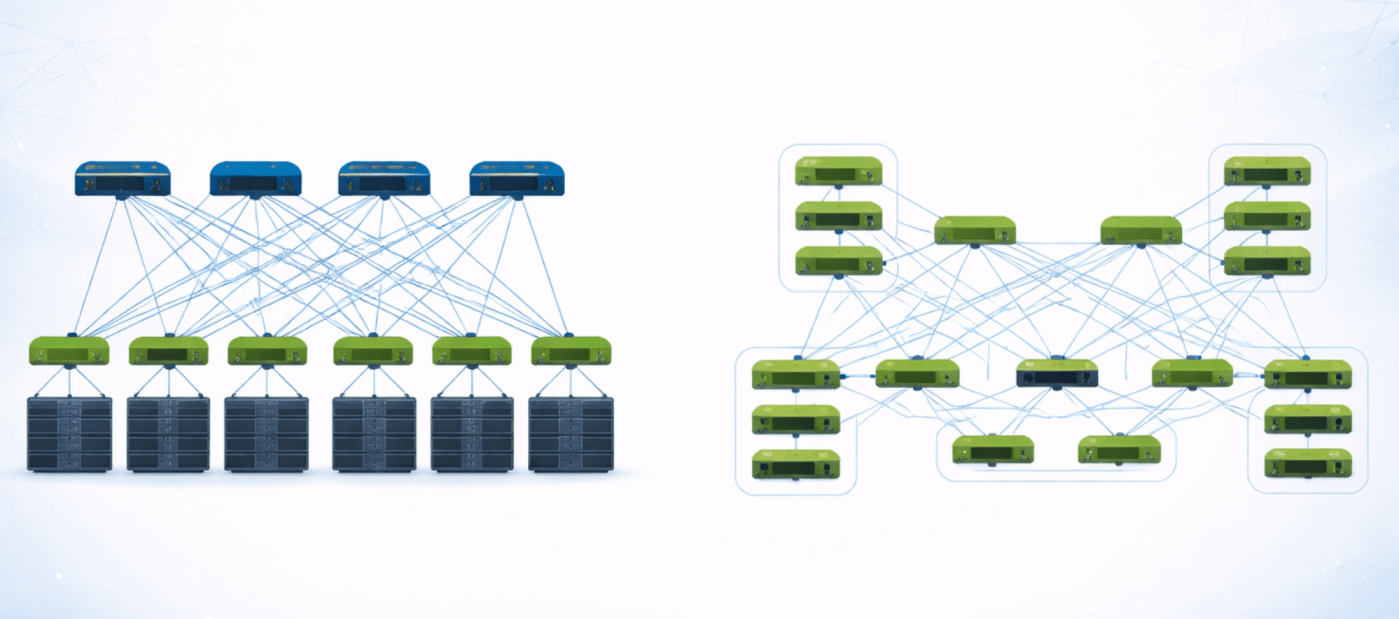

In modern data center networking, the leaf-spine Clos fabric has become the default architecture for good reason. It’s predictable, scalable, and aligns well with familiar Ethernet-based designs and operational models. But as workloads evolve, particularly with the rise of large-scale AI and distributed systems, alternative topologies like butterfly fabrics are getting renewed attention. Both architectures aim to deliver high bandwidth, low latency, and scale, but they approach the problem in fundamentally different ways.

Leaf-Spine Fabrics

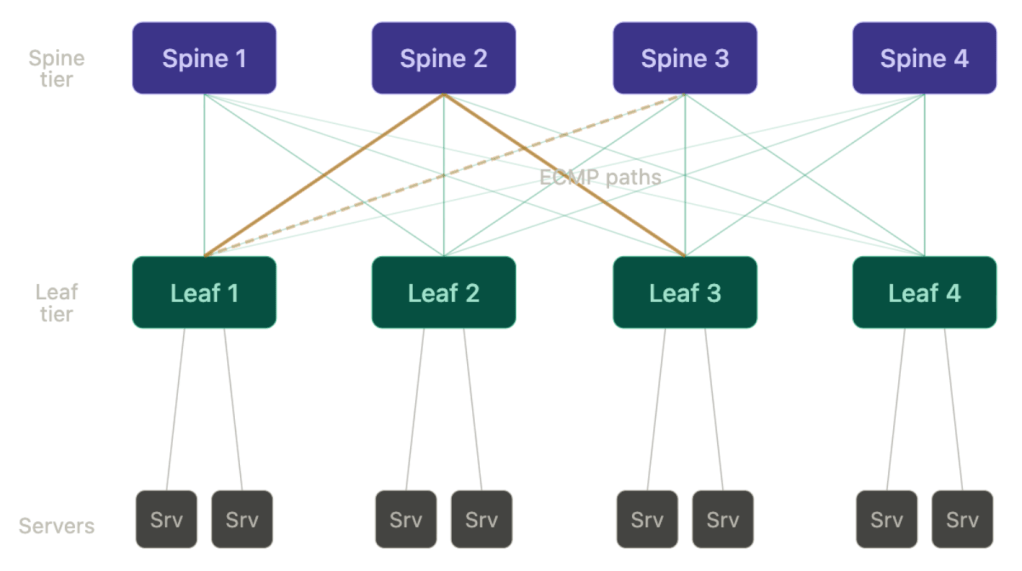

A leaf-spine fabric is a practical implementation of a multi-stage Clos network. In its simplest form, it has two tiers: leaf switches that connect to servers, and spine switches that interconnect all the leaf switches. Every leaf connects to every spine, creating a fabric with uniform connectivity and consistent latency. Traffic between endpoints on different leaves follows a predictable leaf-to-spine-to-leaf path, and with one link per leaf-spine pair there is one equal-cost path per spine.
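To make the “every leaf connects to every spine” property concrete, here’s a minimal Python sketch that builds a small leaf-spine graph and enumerates the paths between two leaves. The switch names and helper functions are hypothetical, and it assumes exactly one link per leaf-spine pair.

```python
from itertools import product


def build_leaf_spine(num_leaves: int, num_spines: int) -> dict[str, set[str]]:
    """Return an adjacency map in which every leaf links to every spine."""
    adj: dict[str, set[str]] = {}
    leaves = [f"leaf{i}" for i in range(num_leaves)]
    spines = [f"spine{j}" for j in range(num_spines)]
    for leaf, spine in product(leaves, spines):
        adj.setdefault(leaf, set()).add(spine)
        adj.setdefault(spine, set()).add(leaf)
    return adj


def leaf_to_leaf_paths(adj: dict[str, set[str]], src_leaf: str, dst_leaf: str) -> list[list[str]]:
    """List every leaf -> spine -> leaf path between two different leaves."""
    return [
        [src_leaf, spine, dst_leaf]
        for spine in sorted(adj[src_leaf])
        if dst_leaf in adj[spine]
    ]


fabric = build_leaf_spine(num_leaves=6, num_spines=4)
paths = leaf_to_leaf_paths(fabric, "leaf0", "leaf5")
print(len(paths))  # 4: one equal-cost path per spine
print(paths[0])    # ['leaf0', 'spine0', 'leaf5']
```

Every path has the same length, which is where the uniform latency comes from, and the path count is simply the spine count.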

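This design has several architectural advantages. First, it’s relatively easy to operate. The topology is regular and repeatable, which simplifies both deployment and growth. Need more capacity between leaves? Add more spine switches, as long as every leaf has a free uplink port for the new spine. Need more server ports? Add more leaf switches and scale the spine layer accordingly, since the spine port count caps how many leaves the fabric can hold.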
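The scaling limits above come down to simple port arithmetic. The sketch below assumes one link per leaf-spine pair; the port counts and speeds in the example are hypothetical, not a recommended design.

```python
from dataclasses import dataclass


@dataclass
class LeafSpineDesign:
    leaf_downlinks: int   # server-facing ports per leaf
    leaf_uplinks: int     # spine-facing ports per leaf (one per spine)
    downlink_gbps: int
    uplink_gbps: int
    spine_ports: int      # ports per spine (one per leaf)

    @property
    def num_spines(self) -> int:
        # Every leaf connects to every spine, so leaf uplinks cap the spine count.
        return self.leaf_uplinks

    @property
    def max_leaves(self) -> int:
        # Each spine spends one port per leaf, so spine radix caps the leaf count.
        return self.spine_ports

    @property
    def max_servers(self) -> int:
        return self.max_leaves * self.leaf_downlinks

    @property
    def oversubscription(self) -> float:
        # Server-facing bandwidth divided by spine-facing bandwidth on each leaf.
        return (self.leaf_downlinks * self.downlink_gbps) / (self.leaf_uplinks * self.uplink_gbps)


design = LeafSpineDesign(leaf_downlinks=48, leaf_uplinks=8,
                         downlink_gbps=100, uplink_gbps=400, spine_ports=64)
print(design.num_spines, design.max_leaves, design.max_servers, design.oversubscription)
# 8 64 3072 1.5
```

Once the leaf uplinks or spine ports run out, a two-tier design can’t grow further, which is usually the point where a third (super-spine) tier comes in.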

Second, it works seamlessly with standard routing protocols. Most deployments use BGP in the underlay with ECMP to distribute traffic across multiple equal-cost paths. Overlay technologies like VXLAN with EVPN provide segmentation and mobility on top. This model has effectively become the industry standard with a mature tooling ecosystem.

From a design perspective, Clos fabrics optimize for modularity and failure isolation. Most link and node failures are absorbed by ECMP, with traffic redistributed across the remaining paths. This makes the network resilient and relatively easy to troubleshoot. However, this reliance on hashing introduces one of the key limitations: imperfect load balancing. ECMP typically hashes packet header fields (usually the 5-tuple) to pick a path per flow, so every packet in a flow follows the same path. Two large flows that hash to the same link share it even while other links sit idle, which leads to uneven utilization, especially in environments dominated by large, long-lived flows. In AI training clusters, for example, synchronized communication patterns like all-reduce can create hotspots that ECMP doesn’t handle gracefully.
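How likely are those collisions? Assuming an idealized hash that places each flow on a uniformly random path, independently of the others, the chance that no two flows share a path is the classic birthday-problem product. Here’s a minimal sketch (the function name is hypothetical):

```python
from fractions import Fraction


def prob_no_collision(num_flows: int, num_paths: int) -> Fraction:
    """Probability that every flow lands on a different path, assuming an
    ideal, uniform, independent hash (the birthday problem)."""
    if num_flows > num_paths:
        return Fraction(0)
    p = Fraction(1)
    for i in range(num_flows):
        # The i-th flow must avoid the i paths already taken.
        p *= Fraction(num_paths - i, num_paths)
    return p


print(prob_no_collision(num_flows=8, num_paths=8))  # 315/131072 (about 0.0024)
print(prob_no_collision(num_flows=4, num_paths=8))  # 105/256 (about 0.41)
```

Even with twice as many paths as elephant flows, a collision is more likely than not, which is why a handful of large flows can leave some uplinks saturated and others idle.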

Butterfly Fabrics

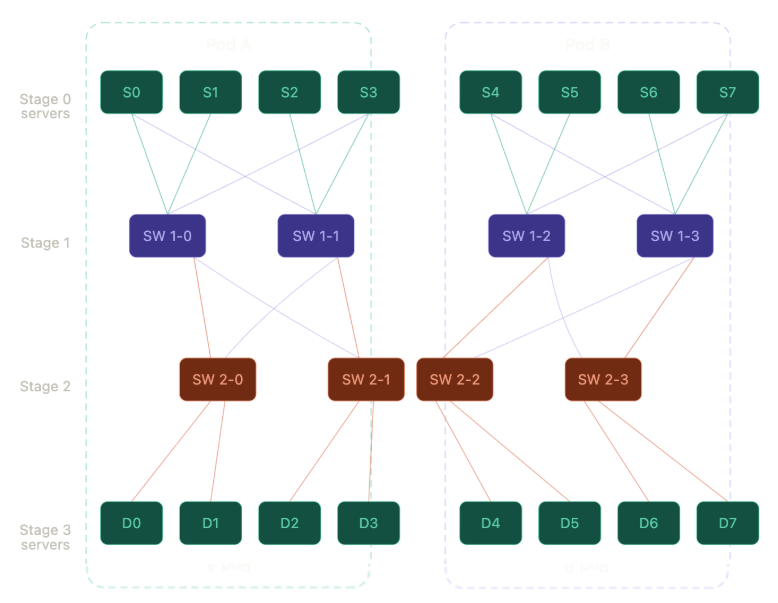

Butterfly fabrics take a more structured and deterministic approach to connectivity. A butterfly network is a multi-stage topology where each stage performs a specific transformation on the path toward the destination. Instead of every node connecting uniformly to a central spine layer, nodes are connected through a sequence of switching stages that route traffic using predefined patterns. The topology is usually described in terms of stages and dimensions: in the classic form, each stage resolves one digit of the destination address, so every hop reduces the “distance” to the destination by one digit.
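A minimal sketch of that digit-by-digit idea, assuming the textbook k-ary n-fly (k to the power n terminals, n stages of k×k switches) with destination-tag routing. The labeling scheme and function names are hypothetical; real butterfly-based products may wire and label things differently.

```python
def to_digits(value: int, k: int, n: int) -> list[int]:
    """Base-k digits of value, most significant first, padded to n digits."""
    digits = []
    for _ in range(n):
        digits.append(value % k)
        value //= k
    return digits[::-1]


def from_digits(digits: list[int], k: int) -> int:
    value = 0
    for d in digits:
        value = value * k + d
    return value


def butterfly_route(src: int, dst: int, k: int, n: int) -> list[tuple[int, int, int]]:
    """Destination-tag routing: stage i overwrites digit i of the current
    address (most significant first) with the destination's digit.

    Returns (stage, switch, output_port) per hop. A switch is labeled by the
    wire address with the digit it controls removed, since the wires entering
    one switch differ only in that digit.
    """
    if not (0 <= src < k**n and 0 <= dst < k**n):
        raise ValueError("terminal out of range")
    addr = to_digits(src, k, n)
    dst_digits = to_digits(dst, k, n)
    hops = []
    for stage in range(n):
        switch = from_digits(addr[:stage] + addr[stage + 1:], k)
        out_port = dst_digits[stage]
        addr[stage] = out_port  # one more digit now matches the destination
        hops.append((stage, switch, out_port))
    return hops


print(butterfly_route(src=3, dst=4, k=2, n=3))  # [(0, 3, 1), (1, 3, 0), (2, 2, 0)]
```

The output port at each stage is read straight from the destination address, with no hashing and no per-flow state, so the same source and destination always take the same path.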

The key advantage of a butterfly fabric is deterministic pathing. Unlike Clos fabrics, which rely on probabilistic load balancing via hashing, butterfly networks choose each path from the destination address, so for the traffic patterns a workload is mapped onto, load can be spread evenly across links by design. This removes hash collisions as a source of uneven link utilization. For workloads with highly structured communication patterns (again, AI training is the perfect example), this can result in significantly better performance and more predictable behavior.
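To see what “even by design” means, the sketch below counts how many flows share each inter-stage wire when every terminal sends to one destination (a permutation), using the same textbook destination-tag routing as above. The function names are hypothetical. A cyclic shift, a common structured pattern, never puts two flows on the same wire, but evenness depends on the pattern: bit reversal on even a 4-terminal butterfly doubles up flows, which is why mapping workloads onto the topology matters.

```python
from collections import Counter


def to_digits(value: int, k: int, n: int) -> list[int]:
    """Base-k digits of value, most significant first, padded to n digits."""
    digits = []
    for _ in range(n):
        digits.append(value % k)
        value //= k
    return digits[::-1]


def link_loads(perm: list[int], k: int, n: int) -> list[Counter]:
    """Flows per wire at each inter-stage level when terminal s sends to
    perm[s] with destination-tag routing (requires n >= 2)."""
    loads = [Counter() for _ in range(n - 1)]
    for src, dst in enumerate(perm):
        addr = to_digits(src, k, n)
        dst_digits = to_digits(dst, k, n)
        for stage in range(n - 1):
            addr[stage] = dst_digits[stage]
            loads[stage][tuple(addr)] += 1
    return loads


def max_link_load(perm: list[int], k: int, n: int) -> int:
    return max(count for level in link_loads(perm, k, n) for count in level.values())


shift = [(s + 1) % 8 for s in range(8)]   # every terminal sends to its neighbor
bit_reversal = [0, 2, 1, 3]               # 2-bit addresses reversed
print(max_link_load(shift, k=2, n=3))         # 1: no wire is shared
print(max_link_load(bit_reversal, k=2, n=2))  # 2: two flows per busy wire
```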

Another benefit is scaling efficiency. A butterfly built from k×k switches can connect N endpoints in roughly log_k N stages, so the hop count grows only logarithmically as the network grows. This can translate into lower latency in very large deployments. However, this efficiency comes with tradeoffs. Butterfly fabrics are more complex to design, particularly when mapping real-world workloads onto the topology. They also tend to be less flexible when traffic patterns are irregular or unpredictable. Generally speaking, they’re harder to operate, require tighter control, and have less mature tooling across the industry.
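Here’s a quick sketch of that logarithmic growth, assuming a textbook k-ary n-fly in which each stage adds one switch hop. The function name and the 64-port radix are illustrative assumptions; an integer loop avoids floating-point rounding in the logarithm.

```python
def butterfly_stages(num_endpoints: int, radix: int) -> int:
    """Smallest n with radix**n >= num_endpoints, i.e. the number of
    switching stages (and switch hops) a k-ary n-fly needs."""
    if num_endpoints < 2 or radix < 2:
        raise ValueError("need at least two endpoints and radix >= 2")
    stages, capacity = 0, 1
    while capacity < num_endpoints:
        capacity *= radix
        stages += 1
    return stages


for endpoints in (4096, 65536, 1048576):
    print(endpoints, butterfly_stages(endpoints, radix=64))
# 4096 2
# 65536 3
# 1048576 4
```

Growing from 4,096 to 65,536 endpoints (16×) adds a single stage.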

Failure handling is another area where the two architectures differ significantly. Clos fabrics are inherently resilient thanks to their path redundancy and the stateless nature of ECMP: when a link or node fails, traffic is simply rehashed across the remaining paths with minimal coordination. Butterfly fabrics, on the other hand, often depend on specific paths determined by the topology. Redundancy can be built into the design, but when deterministic pathing is tied to the topology, recovering from a failure without breaking the balanced traffic distribution often requires tighter coordination or higher-layer recovery mechanisms.
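The contrast is easy to quantify under simplifying assumptions. This sketch assumes a pure textbook butterfly with no added redundancy (so every source-destination pair has exactly one route) and a leaf-spine fabric with one link per leaf-spine pair. The function names are hypothetical.

```python
from itertools import product


def to_digits(value: int, k: int, n: int) -> list[int]:
    """Base-k digits of value, most significant first, padded to n digits."""
    digits = []
    for _ in range(n):
        digits.append(value % k)
        value //= k
    return digits[::-1]


def butterfly_pairs_cut(k: int, n: int, stage: int, wire: tuple[int, ...]) -> int:
    """Count (src, dst) pairs whose only destination-tag route uses the wire
    leaving `stage` (0 <= stage < n - 1) labeled `wire`. All of them lose
    connectivity if that wire fails."""
    count = 0
    for src, dst in product(range(k**n), repeat=2):
        addr, dst_digits = to_digits(src, k, n), to_digits(dst, k, n)
        # After stages 0..stage, the leading digits come from the destination.
        addr[: stage + 1] = dst_digits[: stage + 1]
        if tuple(addr) == wire:
            count += 1
    return count


def leaf_spine_paths_left(num_spines: int, failed_spine: int) -> int:
    """Equal-cost leaf-to-leaf paths that survive when one leaf's link to
    `failed_spine` goes down."""
    return sum(1 for spine in range(num_spines) if spine != failed_spine)


print(butterfly_pairs_cut(k=2, n=3, stage=0, wire=(1, 0, 1)))  # 8 of 64 pairs cut off
print(leaf_spine_paths_left(num_spines=4, failed_spine=2))     # 3 paths remain
```

In this idealized butterfly, any single inter-stage wire is the only route for N of the N² source-destination pairs, so restoring them means adding redundancy or rerouting at a higher layer. The leaf-spine fabric just loses one of its equal-cost paths.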

From an implementation standpoint, Clos fabrics have a clear advantage in today’s environments. They map cleanly onto commodity Ethernet hardware and standard routing protocols, which makes them accessible and cost-effective. The entire ecosystem, from silicon to software to operational tooling, is built around this model. Butterfly fabrics often require more specialized design considerations and, in some cases, tighter integration between hardware and software to fully take advantage of their benefits.

So where does each architecture make sense?

For general-purpose data centers like enterprise environments, cloud platforms, and most multi-tenant infrastructures, the leaf-spine Clos fabric is usually the better choice. It provides a balance of scalability, resilience, and operational simplicity that aligns well with diverse workloads and existing operational practices. It’s flexible enough to handle a wide range of traffic patterns and robust enough to absorb failures without complex coordination.

Butterfly fabrics, on the other hand, are better suited to environments with highly predictable and structured communication patterns. Large-scale AI training clusters, HPC environments, and other tightly coupled distributed systems can benefit from the deterministic load balancing and efficient scaling properties of butterfly topologies. At scale in particular, ECMP can break down under these specialized workloads. Incast (many senders converging on a single receiver) and the synchronized traffic patterns common in AI workloads expose the limits of hash-based load balancing, leaving some links congested while others sit underutilized.
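The synchronized half of that problem can be sketched in a few lines. Assume a hypothetical communication phase in which eight equal-size flows leave a leaf over eight uplinks, flows sharing an uplink split its bandwidth fairly, and the phase can’t finish until the slowest flow does. CRC32 of the 5-tuple stands in for a switch’s hash function here; real switch hashes differ. The addresses and source ports are made up; UDP destination port 4791 is the standard RoCEv2 port.

```python
import zlib
from collections import Counter


def ecmp_uplink(flow: tuple[str, str, int, int, str], num_uplinks: int) -> int:
    """Pick an uplink from a CRC32 of the 5-tuple (a stand-in for a switch hash)."""
    key = "|".join(map(str, flow)).encode()
    return zlib.crc32(key) % num_uplinks


def step_time(assignments: list[int]) -> int:
    """With equal-size flows sharing each uplink fairly, a synchronized step
    ends when the busiest uplink drains, so its duration (in units of one
    flow's transfer time at full link rate) is the largest per-uplink count."""
    return max(Counter(assignments).values())


num_uplinks = 8
# Hypothetical synchronized phase: eight RoCEv2 flows (UDP dst port 4791).
flows = [(f"10.0.0.{i}", f"10.0.1.{i}", 49152 + i, 4791, "udp") for i in range(8)]
hashed = [ecmp_uplink(f, num_uplinks) for f in flows]
spread = [i % num_uplinks for i in range(len(flows))]  # ideal one-flow-per-uplink placement

print("hashed step time:", step_time(hashed))
print("ideal step time: ", step_time(spread))  # 1
```

Any hash collision pushes the hashed step time above the ideal of 1, and because the whole phase waits on its slowest flow, a single collision stretches the step for every GPU in it.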

In these cases, the added complexity, both of the topology itself and of tuning mechanisms like PFC and ECN, can be justified by the performance gains. Ultimately it’s a choice between flexibility and determinism. This becomes especially important in RDMA-based environments, where lossless transport depends on PFC and congestion control depends on ECN, and both can struggle when load is distributed unevenly. For example, a single overloaded link can trigger PFC pauses that back up traffic on otherwise healthy paths.

The broader lesson is that data center network topology is no longer a one-size-fits-all decision. The leaf-spine Clos fabric has certainly earned its place as the default, but it’s not universally optimal. As workloads become more specialized and performance-sensitive, network architects need to think more carefully about how network design interacts with application behavior. In some cases, that may mean sticking with the proven simplicity of a leaf-spine Clos. In others, it may mean exploring more structured approaches like butterfly fabrics to extract every ounce of performance from the infrastructure.

Put simply, Clos fabrics optimize for flexibility. Butterfly fabrics optimize for predictability. Clos is the right answer most of the time. Butterfly becomes relevant when the network is no longer general-purpose.

Thanks,

Phil
